GenNard

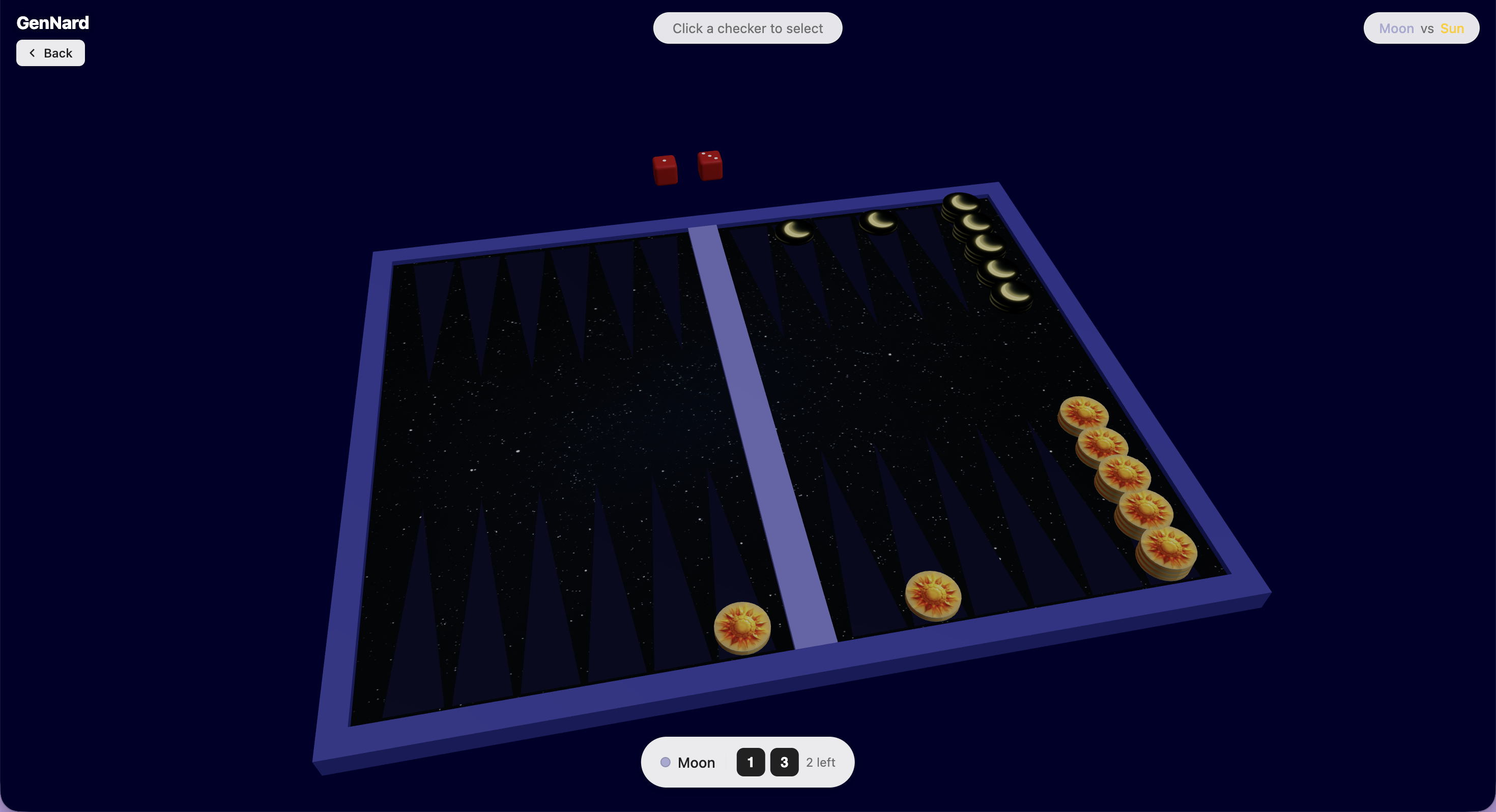

A backgammon game in which users generate themed checkers and a board from a text prompt.

From Text Prompt to 3D Backgammon Board: How the AI Pipeline Works

You type “moon vs sun” into a text box. About thirty seconds later, you're moving crescent-moon checkers across a starry night board against golden sun discs. No image uploads, no manual configuration — just one string of text and the game re-skins itself. Here's exactly how that works under the hood.

The Two-Step AI Pipeline

When you hit Generate, the client fires a single POST /api/generate-theme with two fields: your prompt and a styleMode (classic or creative). That's it. Everything else happens on the server.
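The client side of that request could look roughly like the sketch below. The endpoint and the two body fields come from the text. The names requestTheme and FetchLike are hypothetical, and fetch is injected so the sketch can run outside a browser. Because the path is relative, the bundle contains no backend hostname.

```typescript
// Sketch of the client request. The endpoint and body fields come from the
// article; requestTheme and FetchLike are hypothetical names.
export type StyleMode = "classic" | "creative";

// Only the parts of fetch this sketch uses; a thin wrapper around the global
// fetch satisfies it.
export type FetchLike = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string },
) => Promise<{ ok: boolean; status: number; json(): Promise<unknown> }>;

export async function requestTheme(
  prompt: string,
  styleMode: StyleMode,
  fetchImpl: FetchLike,
): Promise<unknown> {
  // Relative path: the browser sends it to whatever origin served the page.
  const res = await fetchImpl("/api/generate-theme", {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ prompt, styleMode }),
  });
  if (!res.ok) {
    throw new Error(`generate-theme failed with HTTP ${res.status}`);
  }
  // Returned as unknown so the caller validates the shape before using it.
  return res.json();
}
```

In a browser you would pass something like `(url, init) => fetch(url, init)` as the third argument.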

The first thing the server does is not call an AI model. It hashes the request — SHA-256(prompt|styleMode).slice(0, 16) — and checks if a file already exists at generated/<hash>/theme.json. If it does, the response goes back immediately with zero AI cost. Same prompt, same result, instant return.
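Here is a minimal Node sketch of that cache lookup. It assumes the hash is taken as a lowercase hex digest before slicing and that generated/ sits under a configurable root directory. The names rootDir, readCachedTheme, and writeCachedTheme are hypothetical.

```typescript
import { createHash } from "node:crypto";
import { existsSync, mkdirSync, readFileSync, writeFileSync } from "node:fs";
import { dirname, join } from "node:path";

// SHA-256(prompt|styleMode).slice(0, 16), assuming a lowercase hex digest:
// 16 hex characters keep the first 64 bits of the hash.
export function cacheKey(prompt: string, styleMode: string): string {
  return createHash("sha256")
    .update(`${prompt}|${styleMode}`, "utf8")
    .digest("hex")
    .slice(0, 16);
}

// Hypothetical helper: <rootDir>/generated/<hash>/theme.json
function themePath(rootDir: string, prompt: string, styleMode: string): string {
  return join(rootDir, "generated", cacheKey(prompt, styleMode), "theme.json");
}

// Returns the cached theme on a hit, or null on a miss.
export function readCachedTheme(rootDir: string, prompt: string, styleMode: string): unknown {
  const file = themePath(rootDir, prompt, styleMode);
  return existsSync(file) ? JSON.parse(readFileSync(file, "utf8")) : null;
}

// Stores a freshly generated theme so the next identical request costs nothing.
export function writeCachedTheme(
  rootDir: string,
  prompt: string,
  styleMode: string,
  theme: unknown,
): void {
  const file = themePath(rootDir, prompt, styleMode);
  mkdirSync(dirname(file), { recursive: true });
  writeFileSync(file, JSON.stringify(theme));
}
```

Since styleMode is part of the hashed string, the same prompt in classic and creative mode gets two separate cache entries.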

If it's a cache miss, the server spins up the two-step pipeline:

First, the LLM plans; it doesn't draw anything. Qwen-72B receives your raw prompt, and its only job is to output a structured JSON object: a name and description for each player's theme, three image-generation prompts (one per texture), and ten hex color values for the board UI. No images, just a plan. Text models are genuinely good at this kind of structured extraction, much better than an image model would be if it also had to work out the color palette.
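A plan is only useful if it has exactly the expected shape, so it makes sense to validate it before any image call goes out. The following is a minimal sketch of such a check. The text gives only what the plan contains, not how it is keyed, so the field names (player1, imagePrompts, colors, and so on) are assumptions.

```typescript
// Hypothetical plan shape: the counts come from the article, the keys do not.
export interface PlayerTheme {
  name: string;
  description: string;
}

export interface ThemePlan {
  player1: PlayerTheme;
  player2: PlayerTheme;
  imagePrompts: { checker1: string; checker2: string; board: string };
  colors: string[]; // exactly ten "#rrggbb" values for the board UI
}

const HEX_COLOR = /^#[0-9a-fA-F]{6}$/;

function isPlayerTheme(v: unknown): v is PlayerTheme {
  if (typeof v !== "object" || v === null) return false;
  const o = v as Record<string, unknown>;
  return typeof o.name === "string" && typeof o.description === "string";
}

// Parses the LLM's raw text and throws unless it matches the plan shape,
// so a malformed plan fails before any image request is sent.
export function parseThemePlan(raw: string): ThemePlan {
  const data: unknown = JSON.parse(raw);
  if (typeof data !== "object" || data === null) {
    throw new Error("plan must be a JSON object");
  }
  const d = data as Record<string, unknown>;
  if (!isPlayerTheme(d.player1) || !isPlayerTheme(d.player2)) {
    throw new Error("plan needs a name and description for each player");
  }
  const prompts = d.imagePrompts;
  if (typeof prompts !== "object" || prompts === null) {
    throw new Error("plan needs three image prompts");
  }
  const p = prompts as Record<string, unknown>;
  if (!["checker1", "checker2", "board"].every((k) => typeof p[k] === "string")) {
    throw new Error("plan needs three image prompts");
  }
  const colors = d.colors;
  if (
    !Array.isArray(colors) ||
    colors.length !== 10 ||
    !colors.every((c) => typeof c === "string" && HEX_COLOR.test(c))
  ) {
    throw new Error("plan needs exactly ten hex colors");
  }
  return d as unknown as ThemePlan;
}
```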

Next come three images, fired in parallel. The server sends the three image prompts simultaneously to FLUX.1-schnell (via HuggingFace): one call for player 1's checker, one for player 2's, and one for the board texture. Because the calls run in parallel rather than sequentially, the total wait is roughly that of the slowest single call, not three times as long.
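The fan-out corresponds directly to Promise.all. In this sketch, generateImage is a hypothetical injected function that stands in for one FLUX.1-schnell request; no particular Hugging Face client API is assumed.

```typescript
// Stand-in for a single text-to-image request; injected so no SDK is assumed.
export type GenerateImage = (prompt: string) => Promise<Uint8Array>;

export interface ImagePrompts {
  checker1: string;
  checker2: string;
  board: string;
}

export interface ImageSet {
  checker1: Uint8Array;
  checker2: Uint8Array;
  board: Uint8Array;
}

// All three requests start before any is awaited, so total latency is roughly
// that of the slowest call. Promise.all rejects as soon as any one fails.
export async function generateImages(
  prompts: ImagePrompts,
  generateImage: GenerateImage,
): Promise<ImageSet> {
  const [checker1, checker2, board] = await Promise.all([
    generateImage(prompts.checker1),
    generateImage(prompts.checker2),
    generateImage(prompts.board),
  ]);
  return { checker1, checker2, board };
}
```

The all-or-nothing rejection fits a theme that is useless without every texture. The text does not describe how the real server handles a single failed image, so retries and fallbacks are left out.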

Each image comes back as a base64 data URL. The entire response (two checker textures, one board texture, ten colors, and metadata) is one self-contained JSON object. The images are never uploaded to a separate file host, and nothing requires a second fetch.
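This sketch shows one way to turn raw image bytes into data URLs and pack everything into a single object. The text specifies only what the response contains, so the field names and the image/png MIME type are assumptions. The interface is a hypothetical stand-in for the shared ThemeGenerationResponse.

```typescript
// Hypothetical stand-in for the shared ThemeGenerationResponse; field names are assumed.
export interface PlayerThemeOut {
  name: string;
  description: string;
  checkerTexture: string; // data URL
}

export interface ThemeGenerationResponse {
  player1: PlayerThemeOut;
  player2: PlayerThemeOut;
  boardTexture: string; // data URL
  colors: string[];
}

// Encodes raw image bytes as a data URL using Node's Buffer for base64.
// image/png is an assumption; use whatever format the image endpoint returns.
export function toDataUrl(bytes: Uint8Array, mimeType = "image/png"): string {
  return `data:${mimeType};base64,${Buffer.from(bytes).toString("base64")}`;
}

// Packs metadata, textures, and colors into one JSON-serializable object.
export function assembleResponse(
  players: [{ name: string; description: string }, { name: string; description: string }],
  images: { checker1: Uint8Array; checker2: Uint8Array; board: Uint8Array },
  colors: string[],
): ThemeGenerationResponse {
  return {
    player1: { ...players[0], checkerTexture: toDataUrl(images.checker1) },
    player2: { ...players[1], checkerTexture: toDataUrl(images.checker2) },
    boardTexture: toDataUrl(images.board),
    colors,
  };
}
```

Base64 adds roughly a third to each image's size, which is the price paid for needing no second fetch.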

From JSON Response to 3D Scene

The server response lands on the client as a single TypeScript interface called ThemeGenerationResponse, defined in shared/ so both sides agree on the shape without duplicating types.

On the client, a Zustand store acts as a simple state machine: idle → loading → ready, or loading → error when the request fails. The LandingPage calls generate() and the store flips to loading; when the response arrives, it flips to ready. The GamePage watches the store and renders the 3D board only once status is ready. The result stays clean: no prop-drilling, and no component talks directly to the API.
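The store's status handling comes down to a small transition table. The following sketch is framework-free so it runs without Zustand; in the app this logic would live inside the store's actions. The event names, and the choice to let both ready and error start a new generation, are assumptions.

```typescript
export type Status = "idle" | "loading" | "ready" | "error";
export type ThemeEvent = "generate" | "resolve" | "reject";

// Allowed transitions. ready and error are the two outcomes of a request;
// this sketch assumes either one may start a new request.
const TRANSITIONS: Record<Status, Partial<Record<ThemeEvent, Status>>> = {
  idle: { generate: "loading" },
  loading: { resolve: "ready", reject: "error" },
  ready: { generate: "loading" },
  error: { generate: "loading" },
};

// Returns the next status, or throws if the event is invalid in the current one.
export function transition(status: Status, event: ThemeEvent): Status {
  const next = TRANSITIONS[status][event];
  if (next === undefined) {
    throw new Error(`cannot "${event}" while ${status}`);
  }
  return next;
}

// GamePage-style guard: render the 3D board only once the theme is ready.
export function shouldRenderBoard(status: Status): boolean {
  return status === "ready";
}
```

Making invalid transitions throw surfaces bugs like a stray resolve after an error, instead of letting the UI drift into an inconsistent state.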

The interesting part is useTextureLoader. Texture loading in Three.js is usually pointed at an image file on a server, but here the response already contains the full image data as base64 data URLs. The hook passes those directly to THREE.TextureLoader, which accepts a data URL like any other URL. The result is a set of THREE.Texture objects passed as props into the scene components. The textures are uploaded to the GPU, Checker3D and Board3D apply them to their materials, and what the AI described in text is now rendered geometry.

One detail worth noting: the hook calls texture.dispose() on unmount, so you're not leaking GPU memory every time a user navigates away and back.
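The load-and-dispose lifecycle can be separated from React. This sketch uses small structural types instead of importing three.js. THREE.TextureLoader's load(url) returns a THREE.Texture, and THREE.Texture has a dispose() method, so the real classes fit these shapes. The names loadTextures and disposeAll are hypothetical.

```typescript
// Structural stand-ins so the sketch runs without three.js installed.
export interface DisposableTexture {
  dispose(): void;
}

export interface TextureLoaderLike<T extends DisposableTexture> {
  load(url: string): T;
}

// Hypothetical helper: loads one texture per data URL and returns a cleanup
// function that frees the GPU resources each texture holds.
export function loadTextures<T extends DisposableTexture>(
  urls: readonly string[],
  loader: TextureLoaderLike<T>,
): { textures: T[]; disposeAll: () => void } {
  const textures = urls.map((url) => loader.load(url));
  let disposed = false;
  return {
    textures,
    disposeAll: () => {
      if (disposed) return; // make cleanup idempotent
      disposed = true;
      textures.forEach((t) => t.dispose());
    },
  };
}
```

Inside a useEffect, you would call loadTextures with the response's data URLs and a new THREE.TextureLoader, keep the textures in state, and return disposeAll as the effect's cleanup. React then runs it on unmount and before the effect re-runs with new URLs.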

The Orchestrator Pattern

The AI integration sits behind a simple interface. The active provider is controlled by a single environment variable — THEME_PROVIDER — so swapping models requires no route changes and no client changes.

This works because both the HuggingFace orchestrator and any alternative implement the same interface:

```typescript
interface IOrchestrator {
  generate(prompt: string, styleMode: StyleMode): Promise<ThemeGenerationResponse>;
}
```

The entry point picks one at startup, and the rest of the app never needs to know which it got. This is useful beyond switching providers: you can point THEME_PROVIDER at a cheaper model in production and a more capable one for testing, without touching any application logic.
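Here is a sketch of that startup selection, assuming a registry keyed by provider name. The provider names, the registry type, and the minimal ThemeGenerationResponse placeholder are illustrative only; the real response type lives in shared/.

```typescript
export type StyleMode = "classic" | "creative";

// Minimal placeholder; the real ThemeGenerationResponse lives in shared/.
export interface ThemeGenerationResponse {
  colors: string[];
}

export interface IOrchestrator {
  generate(prompt: string, styleMode: StyleMode): Promise<ThemeGenerationResponse>;
}

// Hypothetical registry mapping THEME_PROVIDER values to factories.
export type OrchestratorRegistry = Record<string, () => IOrchestrator>;

// Resolves the provider once at startup; a missing or unknown name fails immediately.
export function selectOrchestrator(
  providerName: string | undefined,
  registry: OrchestratorRegistry,
): IOrchestrator {
  if (!providerName) {
    throw new Error("THEME_PROVIDER is not set");
  }
  // hasOwnProperty stops names like "toString" from matching Object.prototype.
  if (!Object.prototype.hasOwnProperty.call(registry, providerName)) {
    const known = Object.keys(registry).join(", ");
    throw new Error(`Unknown THEME_PROVIDER "${providerName}" (known: ${known})`);
  }
  return registry[providerName]();
}
```

Calling this once with process.env.THEME_PROVIDER and passing the result to the route handler means a typo in the variable shows up as a startup error, not as a failed request later.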

The Game Engine Is Deliberately Ignorant of All This

While the AI pipeline is async, non-deterministic, and talks to external services, the game engine is the exact opposite. It's a standalone TypeScript package with zero runtime dependencies. Every function is pure — same input always produces the same output. The core is a single reducer:

gameReducer(state, action) → state

The engine has no idea textures exist. The AI pipeline has no idea backgammon exists. They share no code. This separation is what makes both halves easy to reason about — the unpredictable part (AI) and the deterministic part (game logic) never get tangled together.
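In a dice game, a pure reducer needs its randomness to come from outside. One common way to do that is to carry the rolled values on the action, as the sketch below does. The state fields and action names are hypothetical and cover only a tiny slice of a real backgammon state.

```typescript
// Illustrative slice of a game state; not the engine's real types.
export interface GameState {
  readonly turn: "white" | "black";
  readonly dice: readonly number[]; // die values still to be played this turn
}

// The roll happens outside the engine and arrives as data on the action,
// which keeps the reducer deterministic.
export type GameAction =
  | { type: "ROLL"; dice: [number, number] }
  | { type: "END_TURN" };

export function gameReducer(state: GameState, action: GameAction): GameState {
  switch (action.type) {
    case "ROLL": {
      const [a, b] = action.dice;
      // In backgammon, a double is played four times.
      return { ...state, dice: a === b ? [a, a, a, a] : [a, b] };
    }
    case "END_TURN":
      return { turn: state.turn === "white" ? "black" : "white", dice: [] };
    default: {
      // Exhaustiveness check: a new action type without a case fails to compile.
      const unhandled: never = action;
      return unhandled;
    }
  }
}
```

Since the reducer never reads a clock or a random source, replaying a recorded list of actions from the same starting state always gives the same final state.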

Deployment: One Routing Trick Worth Stealing

The client lives on Firebase Hosting (a CDN), the server lives on Cloud Run. Different domains — which normally means CORS headaches. Instead of configuring CORS headers, Firebase has a rewrite rule:

```json
{ "source": "/api/**", "run": { "serviceId": "gennard-server" } }
```

From the browser's perspective, everything is the same origin: /api/generate-theme hits Firebase, and Firebase proxies it to Cloud Run. No CORS config, no hardcoded backend URL in the client bundle.

The Cloud Run service runs with min-instances=1, so at least one warm container is always available. AI calls already take 10–30 seconds, and adding a cold start on top of that would make the product feel broken. Keeping one instance alive is cheap compared with that UX cost.

Secrets (HF_TOKEN, THEME_PROVIDER) are injected at runtime by Google Secret Manager, never baked into the Docker image or committed to the repo.
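On the server, these values show up as ordinary environment variables; Cloud Run can expose Secret Manager secrets this way. Below is a minimal startup check that assumes both variables are required. The names loadConfig and ServerConfig are hypothetical.

```typescript
export interface ServerConfig {
  hfToken: string;
  themeProvider: string;
}

// Reads required settings once at startup and fails fast if any are missing.
// The error lists variable names only, never their values.
export function loadConfig(env: Record<string, string | undefined>): ServerConfig {
  const required = ["HF_TOKEN", "THEME_PROVIDER"] as const;
  const missing = required.filter((name) => !env[name]);
  if (missing.length > 0) {
    throw new Error(`Missing required environment variables: ${missing.join(", ")}`);
  }
  return { hfToken: env.HF_TOKEN as string, themeProvider: env.THEME_PROVIDER as string };
}
```

Calling loadConfig(process.env) at boot means a misconfigured deployment fails when the container starts, not on the first user's Generate click.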